It is easy to say that speed thrills web users, but delivering that speed can kill the developers.

Website developers have a lot on their hands because people look down on websites with poor load times. Loading time is one of the critical factors by which website performance is judged.

Decades back, people stayed glued to their television sets for updates from around the world. Computers and the internet changed things a bit; the news hour, for example, went from family time to private time. But with smartphones, things have changed drastically. People are always on the move and want whatever information they are seeking to be available at lightning speed.

As I said, people look down on websites with slow loading times because such sites let them down in terms of user experience. There is a good reason for that. Today there are hundreds of millions of websites around the world selling the same products and providing identical services, so people have plenty of options. When they find that a certain website is consuming too much of their precious time, they switch.

Too much time, as in ‘loading time’.

Web developers are often told to design a website for users and not for search engines. But how are they supposed to understand the audience? I mean, there are millions of users, right?

Also, it is said that web users do not stay on a website that takes more than 3 seconds to load.

Beyond question, many factors affect the speed of a website. Sometimes bad web design practices alone are enough to hurt loading time.

In this blog post, we are going to look at speed optimization techniques that every website developer should implement.

Website Loading Time: Does it Affect SEO and the Bottom Line?

I don’t know about web users, but PageSpeed definitely matters to Google.

It was in 2010 that Google announced page speed as one of its ranking signals.

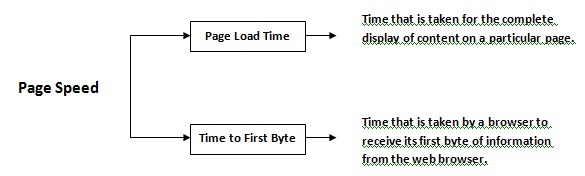

PageSpeed, have you heard of this term?

‘It is a measure of how quickly the content of a web page loads.’

And it matters in two ways.

When your web pages take longer to load, search engines can crawl fewer of them in the time they allot to your site, which tends to have a negative influence on search engine ranking.

Also, people tend to abandon websites with long loading times, which increases the bounce rate and ultimately affects the bottom line.

Website Speed Optimizing Techniques that Should be Followed

METHOD 1: Switch to HTTP/2 Protocol

Conventionally, I would tell you to reduce the number of HTTP requests, but let’s not spend our time on such well-known advice; let’s concentrate on the newer protocol instead.

Yes, HTTP/2 is the major version of HTTP that succeeds HTTP/1.1.

The web’s fundamental protocol is finally able to handle multiple requests at once over a single connection.

Under HTTP/1.1, a TCP connection could in practice handle only one request at a time, so browsers had to open several connections in parallel and queue the remaining requests, which added overhead and delay.

Also, data was transferred in a not-so-compact plain-text message format, which increased the time required for processing and, together with the one-request-at-a-time limit, the number of round trips.

HTTP/2, on the other hand, supports multiplexing, where multiple requests and responses are in flight at the same time over one connection, and it compresses headers. This addresses HTTP/1.1’s head-of-line blocking and uncompressed headers.

The application semantics of HTTP remain unaltered; core concepts such as HTTP methods, status codes, URIs, and header fields stay the same. What changes is how messages are framed and transmitted between the client and the server.
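To get a feel for why multiplexing matters, here is a deliberately simplified back-of-the-envelope model in Python. It is only a sketch: the function names are made up for this post, the default of six parallel connections is an assumption based on a common browser limit per host, and the model ignores bandwidth, handshakes, and server processing time altogether.

```python
import math


def http1_load_time(num_requests: int, rtt_ms: float, max_connections: int = 6) -> float:
    """Rough load time when each connection carries one request at a time.

    Assumption: max_connections=6 mirrors a common browser limit per host.
    """
    # Requests go out in "waves"; each wave costs one round trip.
    waves = math.ceil(num_requests / max_connections)
    return waves * rtt_ms


def http2_load_time(num_requests: int, rtt_ms: float) -> float:
    """Rough load time when all requests are multiplexed on one connection."""
    # Every request is in flight at once, so they share a single round trip.
    return rtt_ms if num_requests > 0 else 0.0


# A page with 30 assets on a 100 ms round-trip link (hypothetical numbers).
print(http1_load_time(30, 100.0))  # 500.0
print(http2_load_time(30, 100.0))  # 100.0
```

Real pages will not see a clean 5x difference, but the model shows where the saving comes from: fewer waves of waiting.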

HTTP/2 also includes server push, which speeds up delivery by departing from the conventional request-and-response pattern.

Normally, after a user requests a page, the browser has to discover and request each critical asset before it can render, and the user waits in the meantime. With server push, the server sends the assets a web page needs without waiting to be asked, so the CSS, JavaScript, and image files arrive in parallel over a single connection.

The approach is compact and allows the website to load faster.

METHOD 2: Use a Content Delivery Network (CDN)

What are the benefits of cache memory?

I know the question seems unrelated to the topic at hand, but trust me, there is a connection.

A CPU stores frequently used data in cache memory so that it can access that data more quickly.

A CDN works much like cache memory. Instead of fetching content from the origin server every time, the browser fetches it from a CDN server, which reduces loading time.

Data (CSS stylesheets, JavaScript, videos, and images) that does not change in response to a user’s actions or inputs is static content.

CDN servers act as caching stations that store this static content. When a visitor requests it, the data is served from the nearest CDN server, which improves website performance.
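The caching idea can be sketched in a few lines of Python. This is a toy model, not how any particular CDN is implemented: `EdgeCache`, `nearest_edge`, `fake_origin`, the file path, and the city names with their latencies are all hypothetical, chosen only to illustrate the "serve from the nearest cache, fall back to the origin on a miss" behaviour described above.

```python
from typing import Callable, Dict


class EdgeCache:
    """A toy CDN edge server that caches static files from an origin server."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch_from_origin = fetch_from_origin
        self._store: Dict[str, bytes] = {}
        self.origin_requests = 0  # how often we had to go back to the origin

    def get(self, path: str) -> bytes:
        if path not in self._store:
            # Cache miss: fetch once from the origin and keep a copy.
            self.origin_requests += 1
            self._store[path] = self._fetch_from_origin(path)
        # Cache hit (or freshly stored copy): serve from the edge.
        return self._store[path]


def nearest_edge(latency_ms: Dict[str, float]) -> str:
    """Pick the edge location with the lowest measured latency."""
    return min(latency_ms, key=lambda name: latency_ms[name])


def fake_origin(path: str) -> bytes:
    """Stand-in for the real origin server (hypothetical)."""
    return f"contents of {path}".encode()


edge = EdgeCache(fake_origin)
edge.get("/css/site.css")
edge.get("/css/site.css")
print(edge.origin_requests)  # 1, the second request never reached the origin
print(nearest_edge({"mumbai": 18.0, "frankfurt": 120.0, "virginia": 210.0}))  # mumbai
```

A real CDN also has to expire and refresh cached copies, which this sketch leaves out.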

METHOD 3: Use Brotli to Reduce Bandwidth Usage

Brotli is an open-source, lossless data compression algorithm introduced by Google to reduce total bandwidth consumption and improve content load time.

The algorithm achieves dense compression by combining a variant of the LZ77 algorithm with Huffman coding. Brotli has an advantage over GZIP, with a reported 20-26% better compression ratio.
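If you want to see how much a lossless compressor can save on typical text assets, here is a small Python sketch. Note the swap: Python’s standard library does not ship Brotli, so this uses `zlib` (DEFLATE, the algorithm behind GZIP, which also builds on LZ77 and Huffman coding) as a stand-in. The helper name and the sample CSS are made up for illustration; measuring Brotli itself would follow the same pattern with a Brotli binding installed.

```python
import zlib


def compression_savings(data: bytes, level: int = 9) -> float:
    """Return the fraction of bytes saved by compressing data with zlib."""
    if not data:
        raise ValueError("data must not be empty")
    compressed = zlib.compress(data, level)
    # Lossless means decompressing gives back exactly the original bytes.
    assert zlib.decompress(compressed) == data
    return 1 - len(compressed) / len(data)


# Hypothetical, highly repetitive stylesheet; real files compress less dramatically.
css = b".button { color: #ffffff; margin: 0 auto; }\n" * 200
print(f"original: {len(css)} bytes, saved: {compression_savings(css):.0%}")
```

Run it against your own CSS and JavaScript files to get numbers that actually reflect your site.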

METHOD 4: Minify CSS and JS Files

Minification means removing unnecessary characters from code in order to reduce load times and bandwidth usage.

Minification involves removing line breaks, white space, extra semicolons, and comments, and shortening hex color codes. Programmers use these things to make code more readable; that is good practice for developers, but the browser does not need them and they only add bytes to every download.

Minification is considered one of the standard practices for page optimization.
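To make those steps concrete, here is a naive CSS minifier sketched in Python. It is for illustration only: `minify_css` and the sample stylesheet are made up for this post, and the regular expressions do not understand strings, `url(...)` values, or selectors where spacing is significant, so a dedicated minifier remains the right choice for production.

```python
import re


def minify_css(css: str) -> str:
    """Naively minify CSS: comments, white space, extra semicolons, hex codes."""
    # Remove /* ... */ comments, including multi-line ones.
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)
    # Collapse line breaks and runs of white space into a single space.
    css = re.sub(r"\s+", " ", css)
    # Drop the spaces around braces, colons, semicolons, and commas.
    css = re.sub(r"\s*([{}:;,])\s*", r"\1", css)
    # The last semicolon before a closing brace is redundant.
    css = css.replace(";}", "}")
    # Shorten six-digit hex colors with paired digits, e.g. #ffffff to #fff.
    css = re.sub(r"#([0-9a-fA-F])\1([0-9a-fA-F])\2([0-9a-fA-F])\3\b", r"#\1\2\3", css)
    return css.strip()


sample = """
/* primary button */
.button {
    color: #ffffff;
    margin: 0 auto;
}
"""

print(minify_css(sample))  # .button{color:#fff;margin:0 auto}
```

The output is harder for people to read, which is exactly why you keep the readable source and ship only the minified copy.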

A small tip:

Listed here are minifiers that you can use for your resources (HTML, CSS, and JavaScript):

- For HTML, try HTMLMinifier

- For CSS, try CSSNano and csso

- For JavaScript, try UglifyJS
